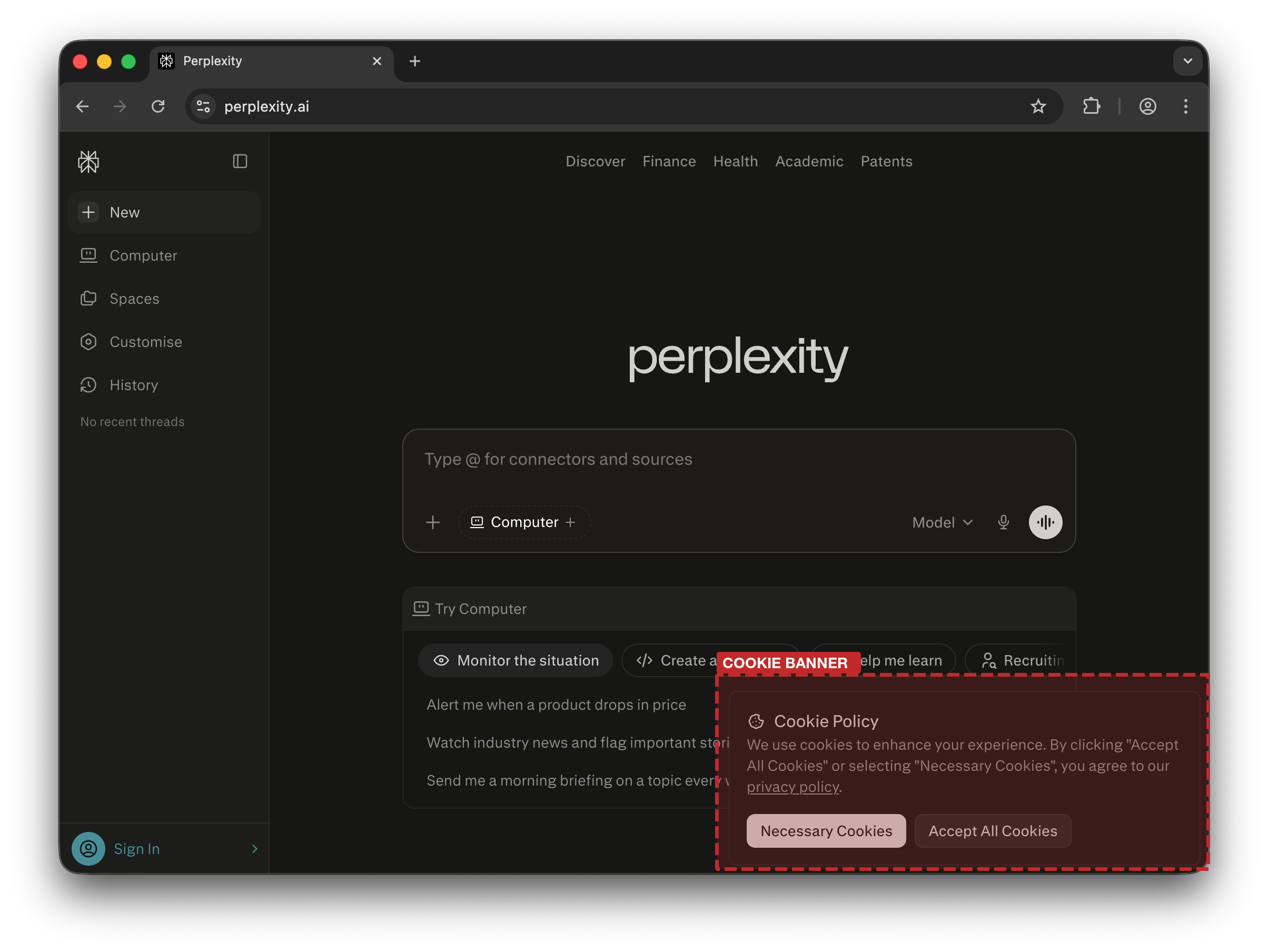

| Perplexity | Meta | `_fbp` cookie, conversation URL | Discontinued Apr 2026 | Discontinued as of April 3rd, 2026, possibly in response to a US class action. |
| Perplexity | Datadog | Email address (raw), conversation URL, metadata (timezone, device ID) | Always | The URL encodes the text of the first query of the chat (i.e., `perplexity.ai/search/QUERY-slug`, limited to ~30 chars), disclosing the intent of the conversation. The slug can be sufficient to access the conversation. The user's email is also disclosed during interaction, not only in registration forms. |
| Perplexity | Singular | Email hash, OS and browser metadata | Always | The user's email hash is also disclosed when users interact with the service, not only in registration forms. |
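
To illustrate why a conversation URL alone can disclose intent, the following minimal sketch recovers readable text from a URL of the form described in the Datadog row. The example URL and the helper name `slug_to_text` are hypothetical, and the sketch assumes the slug is simply the hyphen-separated query text; real slugs may carry additional suffixes.

```python
from urllib.parse import urlparse


def slug_to_text(url: str) -> str:
    """Return the human-readable text carried by a /search/<slug> URL."""
    path = urlparse(url).path
    parts = [p for p in path.split("/") if p]
    # Expect exactly ["search", "<slug>"]; anything else is not handled here.
    if len(parts) != 2 or parts[0] != "search":
        raise ValueError(f"not a search URL: {url!r}")
    # Assumption: hyphens in the slug stand in for spaces in the query.
    return parts[1].replace("-", " ")


# Hypothetical URL as it might appear in a third-party request payload.
leaked = "https://www.perplexity.ai/search/symptoms-of-early-diabetes"
print(slug_to_text(leaked))
```

Any party that logs the URL can apply the same transformation, which is why the URL is treated here as disclosing content rather than just navigation metadata.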

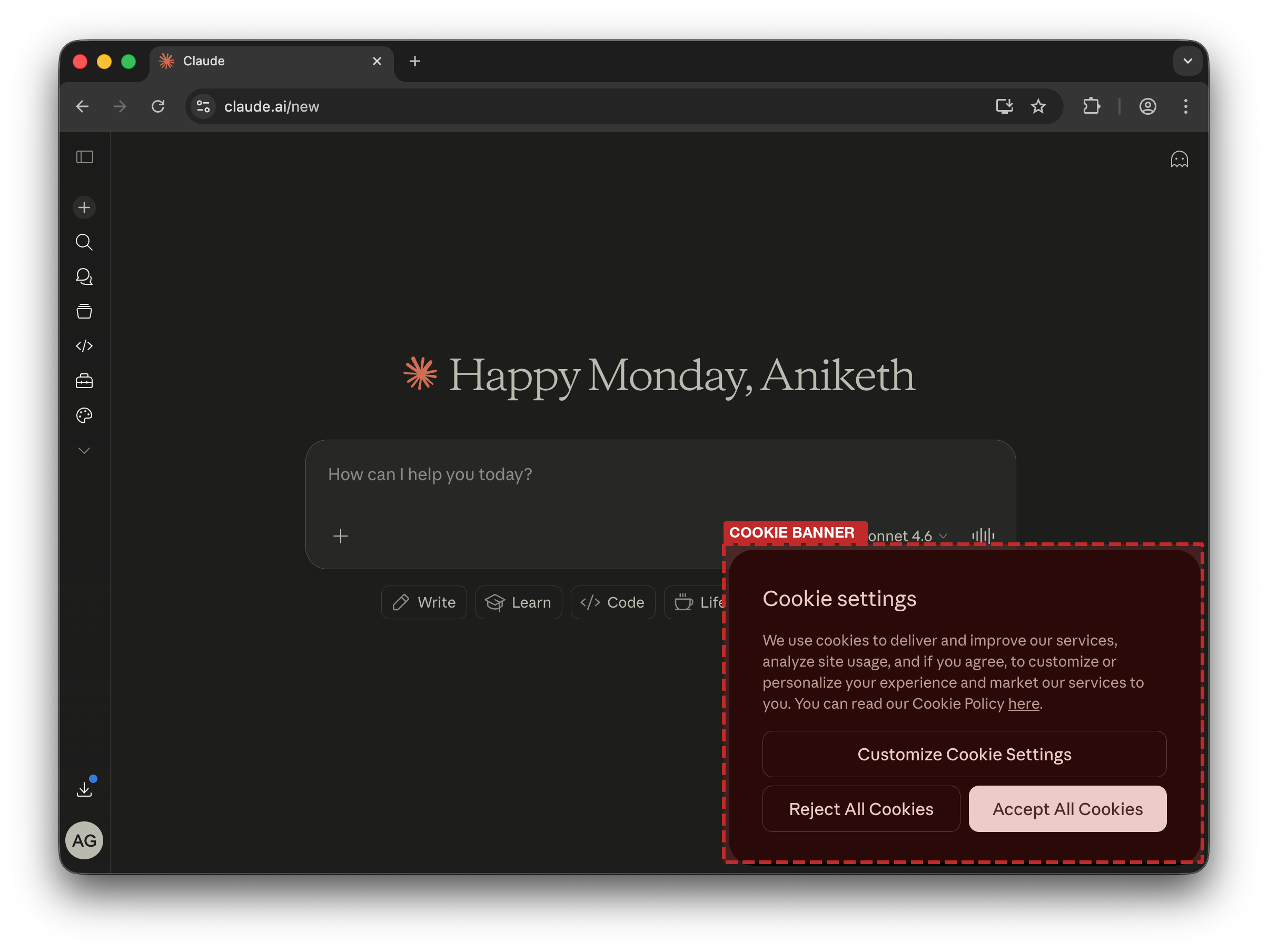

| Anthropic Claude.ai | Meta | `_fbp` cookie and browser metadata | Non-essential cookies accepted | The Meta Pixel loads `fbevents.js` client-side inside a sandboxed iframe at `a.claude.ai`, setting a persistent `_fbp` cookie. In parallel, the same event is forwarded server-side to the Facebook Conversions API. Both transmissions share the same Segment `anonymousId`, potentially allowing Meta to join client-side and server-side events under a single user identity. Blocking the client-side pixel does not prevent Meta from receiving the event via the server-side channel. When non-essential cookies are rejected, the `_fbp` cookie is not set and the server-side container forwarding events to the Conversions API is not observed. |
| Anthropic Claude.ai | Intercom | Email addresses and conversation URL | Always (authenticated) | A persistent WebSocket to `nexus-websocket-a.intercom.io` sends the current page URL roughly every 2 minutes, giving Intercom a timestamped log of every conversation the user visits, which is sufficient to identify and access the specific conversation. This flow fires unconditionally on every authenticated session. Disclosing email, name, subscription tier, and organisation UUID to a third party in sessions where the support widget is never opened may infringe the data minimisation principle under Article 5 GDPR. |
| Anthropic Claude.ai | Datadog | User anonymous ID, viewport data, page URL (with chat GUID), usage statistics and metadata | Non-essential cookies accepted | Only observed when accepting non-essential cookies. |
| Anthropic Claude.ai | Server-side ×11 | User email, account UUID, subscription plan, page URL (incl. conversation UUID), Segment `anonymousId`, Amplitude session ID, country | Non-essential cookies accepted | Claude uses Segment analytics, fetching its configuration from `a-cdn.anthropic.com` (first-party), which includes the Facebook Pixel PII config. Anthropic explicitly whitelists `email`, `userAgent`, and `country`, and blocklists conversation title, `account_uuid`, `organization_uuid`, `billing_type`, `surface`, and `version`. Proxying through a first-party domain means hostname-based ad blockers targeting `api.segment.io` will not intercept this traffic. The Conversions API configuration loaded from `a-cdn.anthropic.com` indicates that user events are configured to be forwarded server-to-server from Anthropic's infrastructure to eleven trackers: Facebook Conversions API, LinkedIn Conversions API, TikTok Conversions API, Reddit Conversions API, Google Enhanced Conversions, Amplitude, Iterable, HubSpot, Pinterest Conversions API, Podscribe, and DCM Floodlight. As this forwarding occurs server-to-server, it evades ad blockers. Each forwarded event carries two shared identifiers (the Segment `anonymousId` and the Amplitude session ID) that could enable ID bridging, so user activities on Claude may be linkable across advertising platforms to a single Amplitude session without the user's knowledge or consent. If these identifiers are eventually bridged with email hashes, this could facilitate user re-identification and de-anonymization. Only triggered when users accept non-essential cookies. |
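
The ID-bridging concern can be made concrete with a minimal sketch. It only shows how a shared key makes a join possible; it does not claim that any recipient performs such a join. The event records, the field names other than `anonymousId`, and the `bridge` helper are hypothetical.

```python
from collections import defaultdict

# Hypothetical events, as three different destinations might receive them.
events = [
    {"destination": "facebook_capi", "anonymousId": "a1", "fbp": "fb.1.111"},
    {"destination": "linkedin_capi", "anonymousId": "a1", "email_sha256": "9f86d0"},
    {"destination": "amplitude", "anonymousId": "a1", "session_id": 1700000000},
    {"destination": "facebook_capi", "anonymousId": "b2", "fbp": "fb.1.333"},
]


def bridge(events, key="anonymousId"):
    """Merge every identifier that was seen alongside the same shared key."""
    profiles = defaultdict(dict)
    for event in events:
        for field, value in event.items():
            if field not in (key, "destination"):
                profiles[event[key]][field] = value
    return dict(profiles)


profiles = bridge(events)
print(profiles["a1"])
```

In this toy data, the three records sharing `a1` collapse into one profile that combines a platform cookie, an email hash, and a session ID, which is the linkage the row above describes as a risk.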

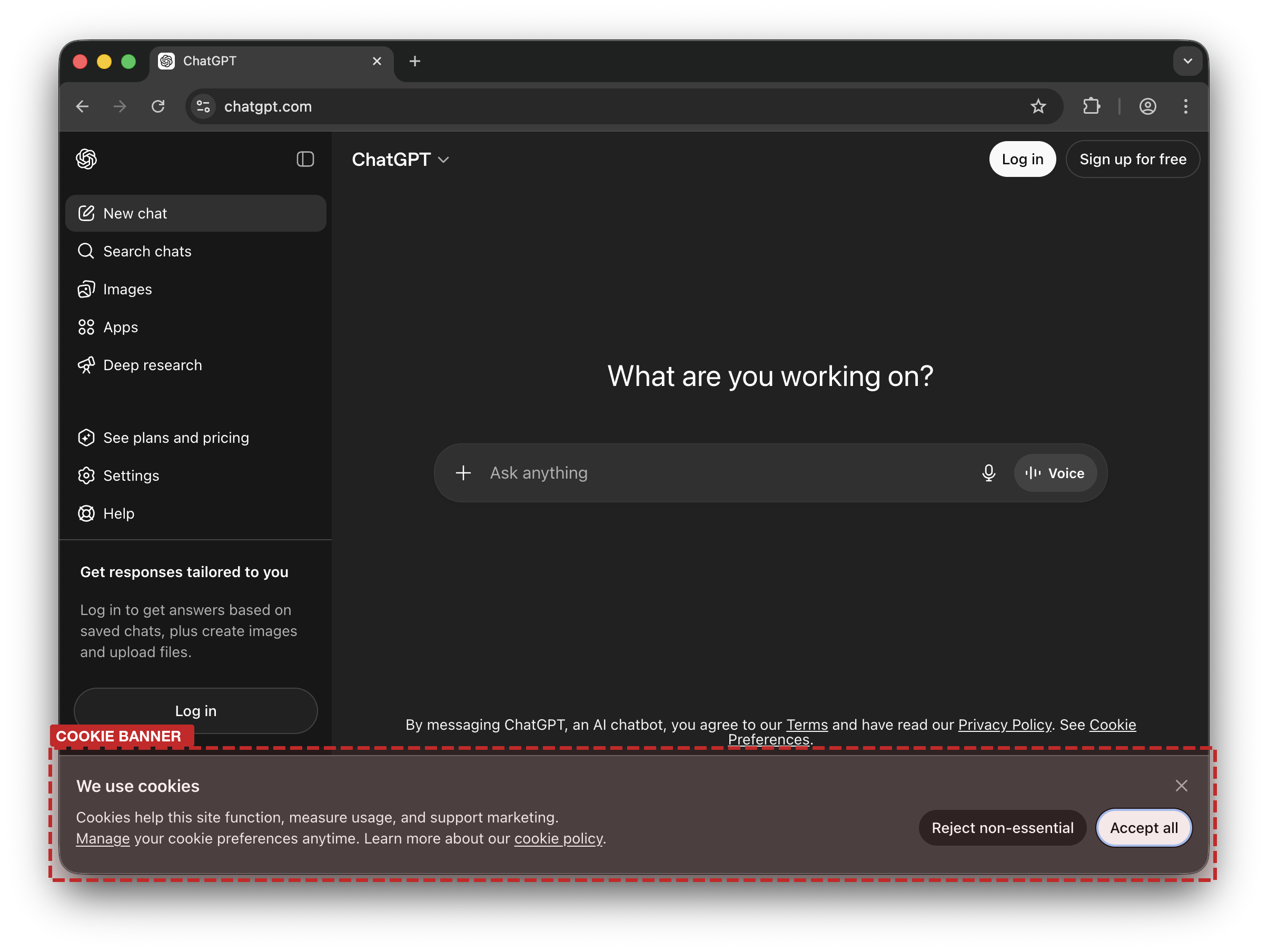

| OpenAI ChatGPT | Google Analytics | Conversation URL, page title (chat topic) | Always (free logged-in) | The chat topic title is transmitted via the `dt` parameter to Google Analytics, alongside the full conversation URL in the `dl` parameter. This leak only occurs for free logged-in users, regardless of whether they accept or reject cookies. ChatGPT's Content-Security-Policy header explicitly whitelists numerous third-party ad and analytics domains (Facebook, TikTok, Google, LinkedIn, Bing, Reddit), confirming the tracking infrastructure is in place. However, none were observed firing in our experiments; activation may depend on account type, geography, A/B test cohort, or server-side tracking. |
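
As a rough illustration of what the `dl` and `dt` parameters expose, the sketch below decodes them from a captured request URL using only the Python standard library. The request URL, the conversation ID, and the title are made-up placeholders, and `extract_page_fields` is a hypothetical helper name.

```python
from urllib.parse import parse_qs, urlsplit


def extract_page_fields(collect_url: str) -> dict:
    """Pull the document location (dl) and document title (dt) from a hit."""
    query = parse_qs(urlsplit(collect_url).query)
    # parse_qs returns a list per key and percent-decodes the values.
    return {
        "location": query.get("dl", [None])[0],
        "title": query.get("dt", [None])[0],
    }


# Placeholder hit; the endpoint path and all values are illustrative only.
hit = (
    "https://www.google-analytics.com/g/collect?v=2"
    "&dl=https%3A%2F%2Fchatgpt.com%2Fc%2F00000000-0000-0000-0000-000000000000"
    "&dt=Tax%20debt%20advice"
)
fields = extract_page_fields(hit)
print(fields["location"])
print(fields["title"])
```

The same check can be run over a HAR export or proxy log to see whether chat titles appear in outgoing analytics requests.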

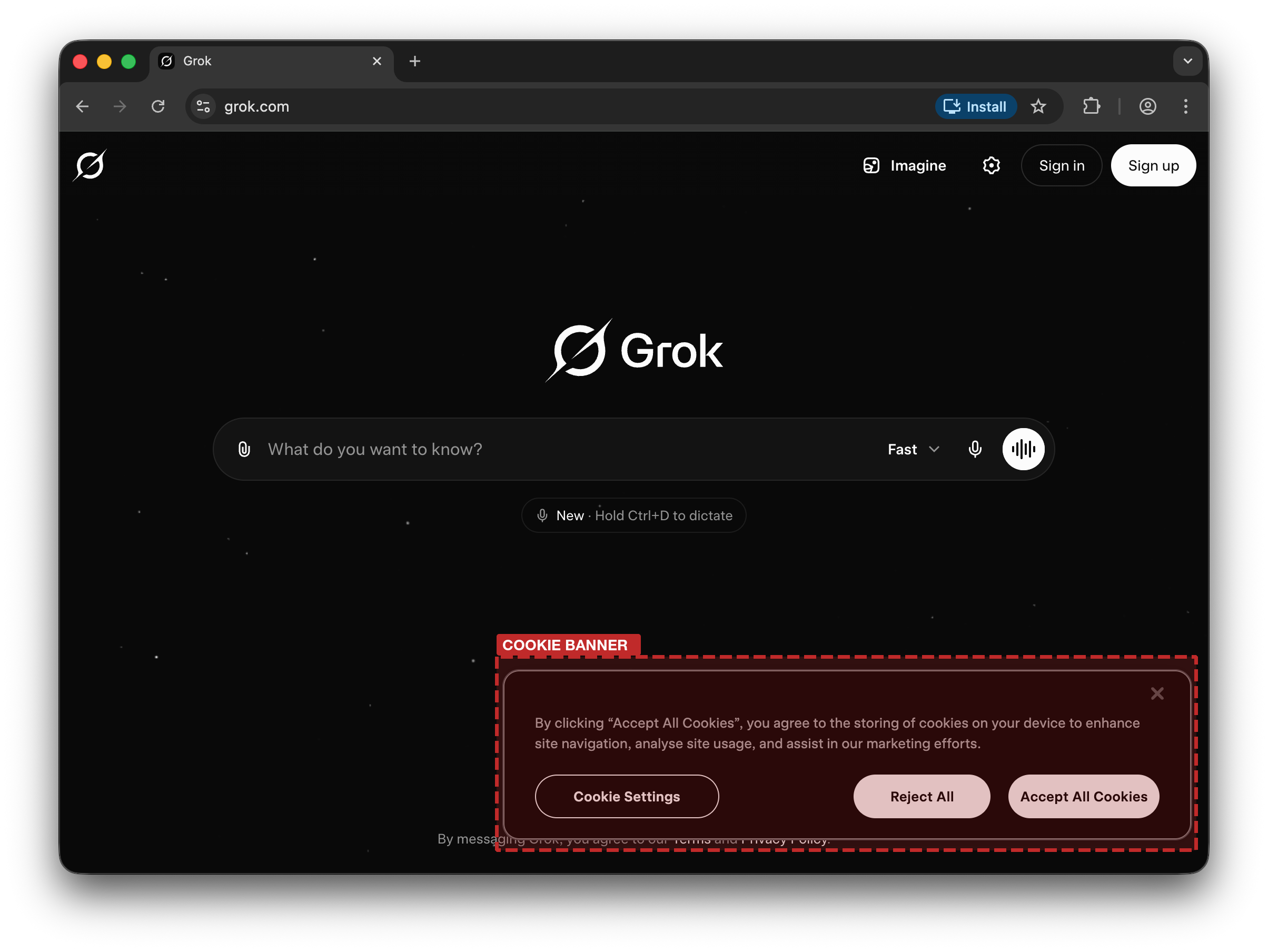

| xAI Grok | Google Analytics & DoubleClick | Conversation URL, page title, metadata | Always | The chat topic title is transmitted via the `dt` parameter to Google Analytics and the `tab` parameter to Google Ads (DoubleClick), alongside the full conversation URL. The connection to Google Analytics occurs in all circumstances, regardless of the user's cookie consent choice in the OneTrust form. |
| xAI Grok | TikTok | Hashed email, conversation URL, page title, `_ttp` cookie | Non-essential cookies accepted | `context.page.url` contains the full conversation URL; `auto_collected_properties.content_data.meta` contains the chat topic title. TikTok collects the email hash on the account login page and maps it to TikTok cookies. The connection only occurs after accepting non-essential cookies. |
| xAI Grok | Meta | Conversation URL (incl. conversation UUID), page title, `_fbp` cookie | Non-essential cookies accepted | Every URL change triggers a `PageView` event. The connection only occurs after accepting non-essential cookies. |
| xAI Grok | Server-side GTM | Conversation URL, page title, `_fbp` and `_ttp` cookies | Non-essential cookies accepted | `grok.com` embeds a Google Tag Manager container (`GTM-TBL6BD7W`) configured to route events through a server-side GTM (sGTM) instance. A custom event, `sent_3_chat_messages`, fires after 3 messages in a session, transmitting the full conversation URL (`page_location`) and chat topic title to sGTM. sGTM then forwards this event server-to-server to the Meta Conversions API and the TikTok Events API, a transfer that is invisible to the browser and cannot be blocked by ad blockers or privacy controls. The same payload carries both Meta's `_fbp` and TikTok's `_ttp` cookies, enabling ID bridging between Facebook and TikTok under a single user identity. Only occurs after accepting non-essential cookies. |
| xAI Grok | TikTok | Conversation screenshot image, verbatim message content (via `og:image` alt text) | Non-essential cookies accepted | When a Grok conversation is shared, the platform generates Open Graph and Twitter Card metadata for the share page. This includes `og:image` and `twitter:image` URLs pointing to a screenshot of the conversation, and `og:image:alt` / `twitter:image:alt` attributes containing verbatim message content. TikTok's pixel reads and transmits these values, exposing not just the conversation URL and topic but actual message text. The screenshot URLs follow a predictable pattern (`grok.com/share/<id>/opengraph-image/<id>`) and are publicly accessible without authentication, meaning any party that receives the share URL could reconstruct and access the conversation screenshot. Applies to shared (public permalink) conversations only. Only occurs after accepting non-essential cookies. |
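
The share-page exposure rests on the fact that any script running on the page can read its `<meta>` tags. The sketch below shows this with the standard-library HTML parser. The sample markup, the `example.com` URL, and the message text are invented for illustration, and `OpenGraphParser` is a hypothetical helper; it is not a reconstruction of TikTok's pixel.

```python
from html.parser import HTMLParser


class OpenGraphParser(HTMLParser):
    """Collect the content of <meta property=...> and <meta name=...> tags."""

    def __init__(self):
        super().__init__()
        self.meta = {}

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        # Open Graph tags use "property"; Twitter Card tags use "name".
        key = attributes.get("property") or attributes.get("name")
        if key and "content" in attributes:
            self.meta[key] = attributes["content"]


# Invented share-page markup; real pages will differ.
share_page = """
<html><head>
<meta property="og:image" content="https://example.com/share/abc/opengraph-image/abc">
<meta property="og:image:alt" content="User: how do I appeal a visa refusal?">
<meta name="twitter:image:alt" content="User: how do I appeal a visa refusal?">
</head><body></body></html>
"""

parser = OpenGraphParser()
parser.feed(share_page)
# Keep only the alt-text fields, which carry the message content.
exposed = {k: v for k, v in parser.meta.items() if k.endswith(":alt")}
print(exposed)
```

Running the same parser over a saved share page is one way to check which message text ends up in metadata before a link is distributed.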